Deep learning is a subfield of machine learning inspired by the way the brain functions. It comprises artificial neural networks and algorithms modeled on how the brain learns and makes decisions. With the emergence of deep learning, we are now able to tackle tasks such as image classification and natural language processing. Deep learning applications provide a helping hand to humans. In some areas, artificial neural networks even outperform humans [1] and approach the Bayes error rate [2], which is the lowest error rate achievable on a given task.
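
For a classification task with input $x$ and label $y$, the Bayes error rate is the error of an ideal classifier that always predicts the most probable label for each input. It can be written as:

$$
\varepsilon^{*} = \mathbb{E}_{x}\left[\, 1 - \max_{y} P(y \mid x) \,\right]
$$

Even this ideal guess is wrong with probability $1 - \max_{y} P(y \mid x)$ for a given $x$, so no model, however it is trained, can achieve a lower average error.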

END TO END LEARNING:

Since the emergence of the backpropagation algorithm, deep learning has boomed in both industry and research. Deep learning applications are getting better every day. One of the most exciting advancements in deep learning is the rise of end-to-end deep learning.

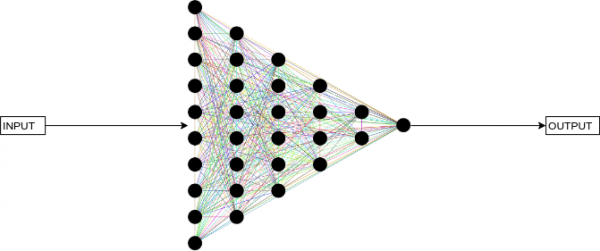

Deep learning uses neural networks with hidden layers to compute the output for a given input. From the user's side, the model is a black box: the user gives an input to the model, and the model computes an output for it.

The user doesn't know anything about the hidden layers or the weights assigned to them; the inner computation is abstracted away. During training, the network repeatedly performs forward and backward propagation. In each forward pass, the output is compared with the desired output and a loss is calculated; the backward pass then computes how much each weight contributed to that loss. With every iteration, the algorithm adjusts the weights of the hidden layers so that the loss is minimized.
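
The training loop described above can be sketched in plain Python. This is a minimal illustration, not a production implementation: the network shape (one input, three tanh hidden units, one output), the mean-squared-error loss, the learning rate and the toy sine-wave data are all assumptions chosen for the example.

```python
import math
import random


def train_tiny_network(data, hidden=3, lr=0.05, epochs=500, seed=0):
    """Full-batch gradient descent on a 1-input, 1-hidden-layer, 1-output network.

    Each epoch: forward pass -> mean squared error -> backward pass -> weight update.
    Returns the loss measured in each epoch (before that epoch's update).
    """
    rng = random.Random(seed)
    # Assumed small random initialization for hidden and output weights
    w1 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0
    n = len(data)
    losses = []
    for _ in range(epochs):
        gw1, gb1, gw2 = [0.0] * hidden, [0.0] * hidden, [0.0] * hidden
        gb2 = 0.0
        loss = 0.0
        for x, y in data:
            # Forward pass through the hidden layer and the output unit
            h = [math.tanh(w1[j] * x + b1[j]) for j in range(hidden)]
            y_hat = sum(w2[j] * h[j] for j in range(hidden)) + b2
            err = y_hat - y
            loss += err * err / n
            # Backward pass: gradient of the mean squared error
            d_out = 2 * err / n
            gb2 += d_out
            for j in range(hidden):
                gw2[j] += d_out * h[j]
                d_h = d_out * w2[j] * (1 - h[j] ** 2)  # tanh'(z) = 1 - tanh(z)^2
                gw1[j] += d_h * x
                gb1[j] += d_h
        losses.append(loss)
        # Update every weight a small step against its gradient
        for j in range(hidden):
            w1[j] -= lr * gw1[j]
            b1[j] -= lr * gb1[j]
            w2[j] -= lr * gw2[j]
        b2 -= lr * gb2
    return losses


data = [(x / 10, math.sin(x / 10)) for x in range(-20, 21)]
losses = train_tiny_network(data)
print(f"first loss {losses[0]:.4f}, last loss {losses[-1]:.4f}")
```

Each entry of `losses` comes from one forward pass over the data; with a small learning rate it shrinks as the backward passes keep adjusting the weights.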

This sequence of steps requires a lot of time and computing power. Only after this tedious procedure does the model finally give out its output.

This, in very basic terms, is a neural network [3].

For complicated applications such as speech recognition and image processing, many neural networks are often involved, one after the other in a series. Let's take a look at the diagram:


Insights into the present system

As you can see, the output of one network acts as the input to the next. These intermediate steps are hand-designed components on the way to the goal. Note that these components are designed by humans: they are the components or features through which humans want the machine to learn. The efficiency of the application therefore also depends on the design of these components, and that design varies from person to person and from model to model. For example, someone could add a new feature such as context recognition after the word-recognition step.
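
A pipeline of hand-designed components can be sketched as a list of functions, where each stage consumes the previous stage's output. The stages below are hypothetical text-processing stand-ins (not real speech-recognition components), chosen only to show how a designer can insert an extra stage, much like adding context recognition after word recognition.

```python
from typing import Any, Callable, List

Stage = Callable[[Any], Any]


def run_pipeline(x: Any, stages: List[Stage]) -> Any:
    """Pass x through each hand-designed stage in order; each output feeds the next stage."""
    for stage in stages:
        x = stage(x)
    return x


# Hypothetical stand-ins for hand-designed components
def normalize(text: str) -> str:
    return text.lower().strip()


def split_words(text: str) -> List[str]:
    return text.split()


def drop_fillers(words: List[str]) -> List[str]:
    return [w for w in words if w not in {"um", "uh"}]


def join_words(words: List[str]) -> str:
    return " ".join(words)


pipeline = [normalize, split_words, join_words]
# Another designer's variant: an extra stage inserted after word splitting
pipeline_v2 = [normalize, split_words, drop_fillers, join_words]

print(run_pipeline("  Um Hello World ", pipeline))     # um hello world
print(run_pipeline("  Um Hello World ", pipeline_v2))  # hello world
```

The final result depends entirely on which stages a human chose and in what order, which is exactly why such systems vary from designer to designer.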

Emergence of the end-to-end approach

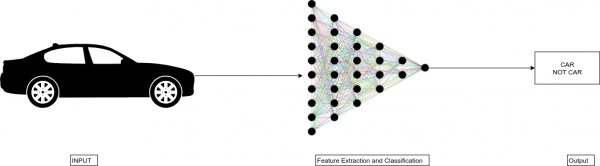

End-to-end learning directly maps an input x to an output y, allowing the model to learn the intermediate features by itself. This gives the model complete independence to choose its own features: end-to-end learning lets the data speak for itself. So if you have a large dataset mapping inputs to outputs, you can train a big enough neural network, and the network will figure out the patterns from the data. The model is given freedom rather than being forced to reflect human preconceptions.
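
To make the idea concrete, here is a toy sketch, assuming two chained linear stages in place of a real deep network. Only (x, y) pairs are provided; there is no label for the intermediate value `h`, yet a single loss on the final output trains both stages together through the chain rule. The target slope, learning rate and starting weights are illustrative assumptions.

```python
def train_end_to_end(pairs, lr=0.05, steps=2000):
    """Train two chained linear stages jointly from (x, y) pairs only.

    Stage 1: h = w1 * x       (intermediate representation, never labelled)
    Stage 2: y_hat = w2 * h   (final output, compared with y)
    """
    w1, w2 = 0.5, 0.5
    n = len(pairs)
    for _ in range(steps):
        g1 = g2 = 0.0
        for x, y in pairs:
            h = w1 * x
            err = w2 * h - y
            # Chain rule: gradients of the mean squared error w.r.t. each stage's weight
            g2 += 2 * err * h / n
            g1 += 2 * err * w2 * x / n
        w1 -= lr * g1
        w2 -= lr * g2
    return w1, w2


pairs = [(x, 3.0 * x) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]
w1, w2 = train_end_to_end(pairs)
print(f"w1={w1:.4f}, w2={w2:.4f}, w1*w2={w1 * w2:.4f}")
```

Only the product `w1 * w2` is pinned down by the data; the individual values, and therefore the intermediate representation, are whatever the optimization finds. That is the sense in which an end-to-end model chooses its own features.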


When to use end-to-end learning

As mentioned earlier, if you have a huge amount of data you can use this type of learning, but if you don't, it is better to go for the traditional model inspired by human preconceptions. A large amount of data is necessary because the model needs many input-output mappings to find the patterns and statistics of the given data. We can say that “to let the data speak, it needs a big mouth (data)”.
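
The dependence on data can be illustrated with a toy experiment. The sketch below assumes a one-parameter linear model (a stand-in for a neural network) and synthetic noisy data: it estimates the slope from `n` input-output pairs and reports the average squared error of that estimate over repeated trials. The true slope, noise level and trial count are arbitrary assumptions.

```python
import random


def slope_error(n, trials=50, true_slope=2.0, noise=1.0, seed=0):
    """Average squared error of a least-squares slope estimate from n noisy (x, y) pairs."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        xs = [rng.uniform(-1, 1) for _ in range(n)]
        ys = [true_slope * x + rng.gauss(0, noise) for x in xs]
        # Least-squares slope for a line through the origin: sum(x*y) / sum(x^2)
        est = sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)
        total += (est - true_slope) ** 2
    return total / trials


for n in (5, 50, 500, 5000):
    print(n, round(slope_error(n), 5))
```

The estimate becomes far more reliable as the number of pairs grows. A large end-to-end network has many more parameters to pin down, so it typically needs far more data than this one-parameter toy.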

Whether to use end-to-end learning really depends on the application for which you are building the model. Since end-to-end learning bypasses the hand-designed components (as shown in the diagram), it may also bypass features that are important according to human knowledge [4]. For example, suppose you are building a model for a self-driving car using end-to-end learning. You would map the current road situation directly to the position of the steering wheel.

In this case, an end-to-end model may fail to learn to follow road signs, or to react correctly in an emergency, such as when a pedestrian or an animal suddenly appears in front of the car. These are very important features that the application requires according to human intelligence, but the model may bypass them.
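
One way to keep such safety-critical behavior is to combine a learned component with a hand-designed one. The sketch below is a hypothetical illustration, not a method from this article or from [4]: `learned_steering` stands for the output of an end-to-end model, `pedestrian_ahead` for a separate, hand-designed detector, and the braking rule is an assumed safety override.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    learned_steering: float  # hypothetical: steering angle predicted end to end from camera input
    pedestrian_ahead: bool   # hypothetical: output of a separate, hand-designed detector


@dataclass
class Action:
    steering: float
    brake: float  # 0.0 = no braking, 1.0 = full braking


def choose_action(obs: Observation) -> Action:
    """Use the learned steering, but let a hand-designed rule add braking in emergencies."""
    if obs.pedestrian_ahead:
        # Human-written safety rule: brake fully; steering still comes from the model
        return Action(steering=obs.learned_steering, brake=1.0)
    return Action(steering=obs.learned_steering, brake=0.0)


print(choose_action(Observation(learned_steering=0.2, pedestrian_ahead=False)))
print(choose_action(Observation(learned_steering=0.2, pedestrian_ahead=True)))
```

Here the emergency behavior does not depend on whether the learned model happened to pick it up from data, at the cost of reintroducing a hand-designed component.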

So we need human judgment to decide when to use the end-to-end approach, based on the application's requirements. For example, you can use end-to-end learning for an English-to-French translator, where the dataset consists of many X-to-Y pairs (English sentences mapped to French sentences).

Pros And Cons:

Pros:

> It allows the data to speak, since the model learns and extracts features from the input-output mappings by itself

> Less burden of hand-designing the intermediate components

Cons:

> As discussed, it may need a large amount of data

> As the model learns components/features by itself, it may exclude potentially useful hand-designed components or features

Conclusion

In conclusion, the end-to-end approach to deep learning is really helpful because it allows the model to learn the components/features by itself. However, you need a large dataset of input-output pairs, and the judgment to use this approach only where the application allows it, since it bypasses the components and features designed from human preconceptions and learns its own instead.

References

[1] https://venturebeat.com/2017/12/08/6-areas-where-artificial-neural-networks-outperform-humans/

[2] Tumer, K. and Ghosh, J. (1996). “Estimating the Bayes error rate through classifier combining”. In Proceedings of the 13th International Conference on Pattern Recognition, Volume 2, 695–699.

[3] Hopfield, J. J. (1982). “Neural networks and physical systems with emergent collective computational abilities”. Proc. Natl. Acad. Sci. U.S.A. 79 (8): 2554–2558.

[4] Andrew Ng, “Whether to use end-to-end deep learning”, Structuring Machine Learning Projects (Coursera). https://www.coursera.org/learn/machine-learning-projects/lecture/H56eb/whether-to-use-end-to-end-deep-learning